The AI metric that actually matters — and it's not adoption

The Wharton School has just published a paper on AI use that should make all of us stop and think, especially those of us who sell advice and consultancy for a living.

Researchers at Wharton tested what happens when people use AI to help them answer questions, while quietly rigging the AI to give wrong answers half of the time. Participants followed the AI's advice 80% of the time, even when it was wrong. Worse, their confidence went up when they used AI, regardless of whether they were right.

To understand why this happens, it helps to know a bit of behavioural science. It’s long been accepted that people have two modes of thinking. System 1 is fast, instinctive, gut-feel thinking. System 2 is slower, more deliberate and analytical.

Now the researchers are proposing that we have a System 3 as well — artificial cognition. External, automated, and increasingly the thing we reach for first. The problem is that System 3 can bypass the other two entirely. You stop forming a judgement and just adopt the AI's output as your own.

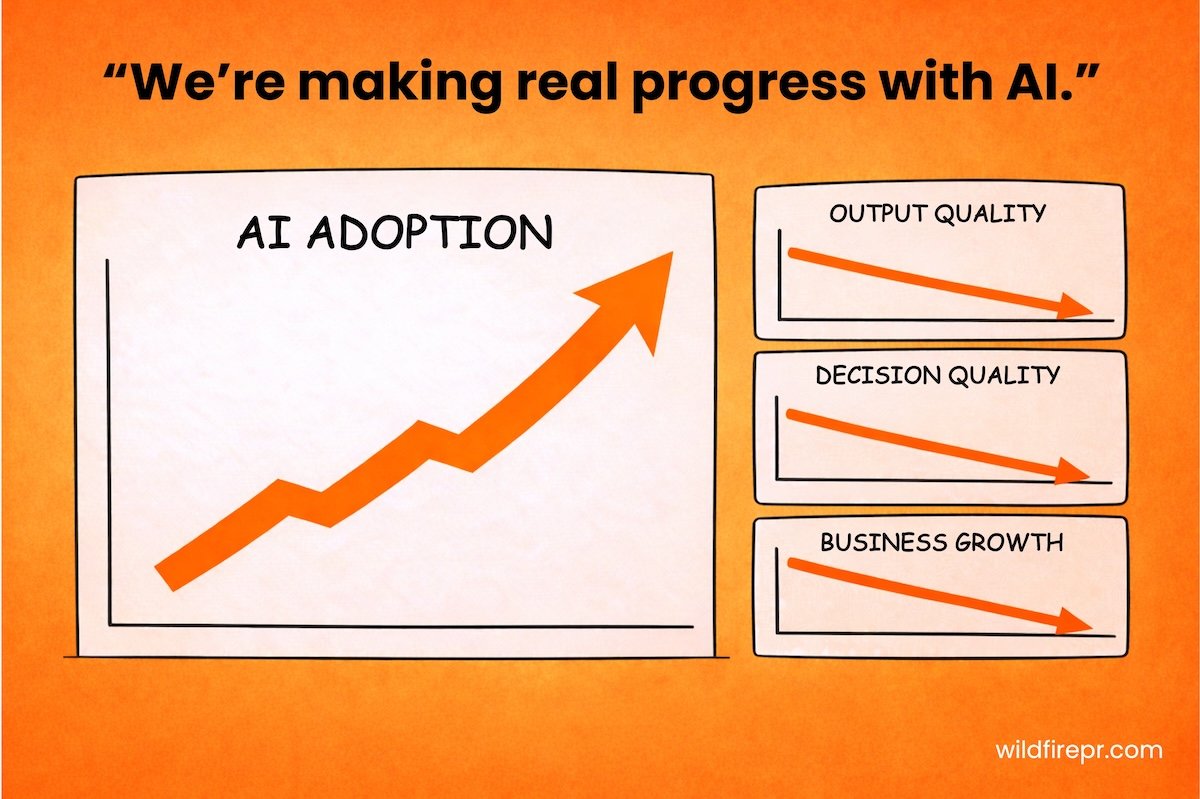

What’s interesting is that the big consultancies, like Accenture and KPMG, are now measuring AI adoption among their consultants, using that data to inform performance reviews and warning that regular use is a requirement for anyone seeking promotion. It’s clearly important that we take advantage of what AI can offer, but this research highlights the pitfalls of striving for AI adoption over AI fluency.

The clever folk at Wharton call this over-reliance on AI ‘cognitive surrender’.

And yet every industry report I’ve read, and every agency award entry I’ve judged recently, includes some version of the same stat: what percentage of employees are using AI and how many hours it’s saving. But is that adoption actually helping us think better, advise better or make sharper decisions? Or are we just taking cognitive shortcuts?

Whether you're in PR, strategy, law, finance, whatever — your value is your judgement. Not your ability to generate output at speed. What clients are actually paying for is someone who has the experience and judgement to critically assess a situation.

Would you accept an analysis from a junior colleague without reading it? Would you sign off on a strategy because someone put it in a deck and it looked confident? Would you take a brief at face value without pushing back on it?

No. Because scrutiny and critical thinking are fundamental to our jobs.

So why are we treating AI outputs differently?

The research found that people who trusted AI more, and who had less appetite for effortful thinking, were the most likely to surrender to it. Here's what that means in practice: the more familiar AI becomes and the more fluent its outputs feel, the easier it is to stop questioning them.

On the surface, AI outputs look pretty good, and scrutinising them can take real effort.

The businesses that will win in the long run aren't necessarily the ones that adopted AI fastest. They're the ones that work out where to build in friction, where to stop and ask: does this actually make sense? What's missing that the AI can't see?

We’ve always challenged assumptions. We push back on briefs. We question the data. Now we’re using AI to help with that process, not just to hand us the answer. Our team of ‘Sparks’ AI personas simulates a campaign’s target audience, so we can stress-test messaging, strategy or creative before anything goes near a client or stakeholder.

The question isn't whether AI has a place in the workflow. It clearly does. The question is whether you're still in the driving seat, or whether your teams have quietly outsourced their brains to the robot.